
My 2025 in software engineering

Unrelenting talk of LLMs now infests all the software ecosystems I frequent.

- Almost all the papers published each weekday on the Software Engineering arXiv have an LLM-themed title. Back when I used to read these LLM papers, they seemed to be more concerned with doing interesting things with LLMs than with doing software engineering research.

- Predictions of the arrival of AGI are shifting further into the future, which is not difficult given that a few years ago people were predicting it would arrive within six months. Small percentage improvements in benchmark scores are trumpeted by all and sundry.

- Towards the end of the year, articles explaining AI’s bubble economics, OpenAI’s high rate of losing money, and the convoluted accounting used to fund some data centers started appearing.

Coding assistants might be great for developer productivity, but for Cursor/Claude/etc to be profitable, a significant cost increase is needed.

Will coding assistant companies run out of money to lose before their customers become so dependent on them that they have no choice but to pay much higher prices?

With predictions of AGI receding into the future, a new grandiose idea is needed to fill the void. Near the end of the year, we got to hear people who must know it’s nonsense claiming that data centers in space would be happening real soon now.

I attend one or two, occasionally three, evening meetups per week in London. Women used to be uncommon at technical meetups. This year, groups of 2–4 women have become common in meetings of 20+ people (perhaps 30% of attendees); men usually arrive individually. Almost all women I talked to were (ex) students looking for a job; this was also true of the younger (early 20s) men I spoke to. I don’t know if attending meetups has been added to the list of things to do to try and find a job.

Tom Plum passed away at the start of the year. Tom was a softly spoken gentleman whose company, PlumHall, sold a C, and then C++, compiler validation suite. Tom lived in Hawaii, and the C/C++ Standard committees were always happy to accept his invitation to host an ISO meeting. The assets of PlumHall have been acquired by Solid Sands.

Perennial was the other major provider of C/C++ validation suites. Its owner, Barry Hedquist, is now enjoying his retirement in Florida.

The evidence-based software engineering Discord channel continues to tick over (invitation), with sporadic interesting exchanges.

What did I learn/discover about software engineering this year?

Software reliability research is a bigger mess than I had previously thought.

I now regularly use LLMs to find mathematical solutions to my experimental models of software engineering processes. Most go nowhere, but a few look like they have potential (here and here and here).

Analysis/data in the following blog posts, from the last 12 months, belongs in my book Evidence-Based Software Engineering, in some form or other (2025 was a bumper year):

Naming convergence in a network of pairwise interactions

Lifetime of coding mistakes in the Linux kernel

Decline in downloads of once popular packages

Distribution of method chains in Java and Python

Modeling the distribution of method sizes

Distribution of integer literals in text/speech and source code

Percentage of methods containing no reported faults

Half-life of Open source research software projects

Positive and negative descriptions of numeric data

Impact of developer uncertainty on estimating probabilities

After 55.5 years the Fortran Specialist Group has a new home

When task time measurements are not reported by developers

Evolution has selected humans to prefer adding new features

One code path dominates method execution

Software_Engineering_Practices = Morals+Theology

Long term growth of programming language use

Deciding whether a conclusion is possible or necessary

CPU power consumption and bit-similarity of input

Procedure nesting a once common idiom

Functions reduce the need to remember lots of variables

Remotivating data analysed for another purpose

Half-life of Microsoft products is 7 years

How has the price of a computer changed over time?

Deep dive looking for good enough reliability models

Apollo guidance computer software development process

Example of an initial analysis of some new NASA data

Extracting information from duplicate fault reports

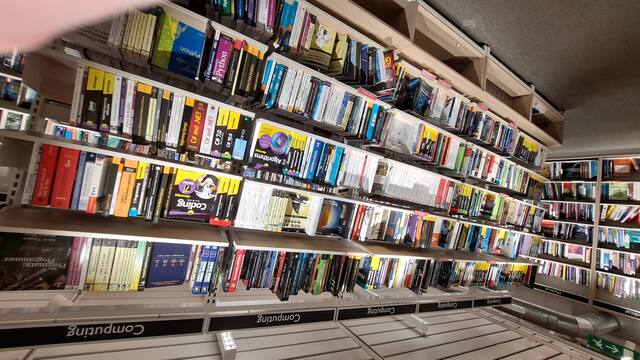

I visited Foyles bookshop on Charing Cross Road during the week (if you’re ever in London, browsing books in Foyles is a great way to spend an afternoon).

Computer books once occupied almost half a floor, but are now down to five bookcases (opposite, statistics occupies one bookcase and the rest of mathematics another):

Around the corner, Gender Studies and LGBTQ+ occupies seven bookcases (the same as last year, as I recall):

C compiler conformance testing: with ChatGPT assistance

How can developers check that a compiler correctly implements all the behavior requirements contained in the corresponding language specification?

An obvious approach is to write lots of test cases for each distinct behavior; such a collection of tests is known as a validation suite when used by a standards organization to test compilers/OS interfaces/etc. The extent to which a compiler’s behavior, when fed these tests, matches that listed in the language specification is a measure of its conformance.

In a world of many compilers with significant differences in behavior (i.e., pre-Open source), it makes economic sense for governments to sponsor the creation of validation suites, and/or companies to offer such suites commercially (mainly for C and C++). The spread of Open source compilers decimated compiler diversity, and compiler validation is fading into history.

New features continue to be added to Cobol, Fortran, C, and C++ by their respective ISO Standards committees. If governments are no longer funding updates to validation suites and the cost of commercial suites is too high for non-vendors (my experience is that compiler vendors find them to be cost-effective), how can developers check that a compiler conforms to the behavior specified by the Standard?

How much effort is required to create some minimal set of compiler conformance tests?

C is the language whose requirements I am most familiar with. The C Standard specifies that a conforming compiler issue a diagnostic for a violation of a requirement appearing in a Constraint clause, e.g., “For addition, either both operands shall have arithmetic type, or …”

There are 80 such clauses, containing around 530 non-blank lines, in N3301, the June 2024 draft. Let’s say 300+ distinct requirements, requiring a minimum of one test each. Somebody very familiar with the C Standard might take, say, 10 minutes per test, which is 3,000 minutes, or 50 hours, or 6.7 days; somebody slightly less familiar might take, say, at least an hour, which is 300+ hours, or 40+ days.
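The effort arithmetic above, written out as a tiny C sketch. The 7.5-hour working day is an assumption inferred from the quoted figures (50 hours becoming 6.7 days), and the function names are illustrative.

```c
/* Hours per working day: an assumption inferred from the figures above
   (50 hours is quoted as 6.7 days). */
#define HOURS_PER_DAY 7.5

/* Total effort, in hours, to write `tests` tests at a fixed rate. */
static double effort_hours(int tests, double minutes_per_test)
{
    return tests * minutes_per_test / 60.0;
}

/* The same effort expressed in working days. */
static double effort_days(int tests, double minutes_per_test)
{
    return effort_hours(tests, minutes_per_test) / HOURS_PER_DAY;
}
```

With these definitions, effort_days(300, 10.0) is about 6.7 and effort_days(300, 60.0) is 40, matching the estimates in the text.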

Lots of developers are using LLMs to generate source code from a description of what is needed. Given Constraint requirements in the C Standard, can an LLM generate tests that do a good enough job checking a compiler’s conformance to the C Standard?

Simply feeding the 157 pages from the Language chapter of the C Standard into an LLM, and asking it to generate tests for each Constraint requirement does not seem practical with the current state of the art; I’m happy to be proved wrong. A more focused approach might produce the desired tests.

Negative tests are likely to be the most challenging for an LLM to generate, because most publicly available source deals with positive cases, i.e., it is syntactically/semantically correct. The wording of Constraints sometimes specifies what usage is not permitted (e.g., clause 6.4.5.3 “A floating suffix df, dd, dl, DF, DD, or DL shall not be used in a hexadecimal floating literal.”), other times specifies what usage is permitted (e.g., clause 6.5.3.4 “The first operand of the . operator shall have an atomic, qualified, or unqualified structure or union type, and the second operand shall name a member of that type.”), or simply specifies a requirement (e.g., clause 6.7.3.2 “A member declaration that does not declare an anonymous structure or anonymous union shall contain a member declarator list.”).
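To make the idea of a negative test concrete, here is a hypothetical test file (my own sketch, not ChatGPT output) for the three Constraints quoted above. Each violation is guarded by a macro, so the file is valid C when no VIOLATION_n macro is defined, and a conforming compiler must issue at least one diagnostic when one of them is defined. The macro names and file layout are assumptions made for illustration.

```c
/* neg.c: hypothetical negative tests for three Constraints quoted above.
   With no VIOLATION_n macro defined the file is valid C; with one defined,
   a conforming compiler must issue at least one diagnostic. */

struct s { int m; };

#ifdef VIOLATION_1
/* 6.4.5.3: df, dd, dl, DF, DD, DL shall not be used as the suffix of a
   hexadecimal floating literal. */
double bad_hex = 0x1.8p1df;
#endif

#ifdef VIOLATION_2
/* 6.5.3.4: the second operand of . shall name a member of the structure. */
int bad_member(struct s v) { return v.not_a_member; }
#endif

#ifdef VIOLATION_3
/* 6.7.3.2: a member declaration that does not declare an anonymous
   structure or union shall contain a member declarator list. */
struct t { int x; int; };
#endif

/* Valid counterpart, so the file compiles cleanly when no violation
   is selected. */
int good_member(struct s v) { return v.m; }
```

For instance, `cc -c -DVIOLATION_2 neg.c` should produce a diagnostic, while `cc -c neg.c` should not. The Standard does not distinguish warnings from errors, so a harness should look for diagnostic output rather than rely only on the exit status (gcc, for example, may only warn about `struct t { int x; int; };`).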

I took the text from the 80 Constraint clauses, removed footnote numbers, and rejoined words split at line-breaks. The plan was to prefix the text of each Constraint with instructions describing the code required. After some experimentation, the instructions I settled on were:

Write a sequence of very short programs which tests that a C compiler correctly flags each violation of the requirements contained in the following excerpt from the latest draft of the C Standard:

Initially, excerpt was incorrectly spelled as except, but this did not seem to have any effect. Perhaps this misspelling is sufficiently common in the training data that LLM weights support the intended association.
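The text preparation and prompt construction described above might look something like the following C sketch. The rule for rejoining words (a hyphen immediately followed by a newline) is my assumption, as are the function names; removal of footnote numbers is not shown.

```c
#include <stdio.h>
#include <string.h>

/* Naive clean-up, assumed for illustration: a '-' immediately followed by
   '\n' is treated as a word split at a line break and both characters are
   dropped; any other '\n' becomes a space.  A genuinely hyphenated word
   (e.g., bit-field) that breaks at a line end would be mangled.
   outsz must be greater than zero. */
static void rejoin_lines(const char *in, char *out, size_t outsz)
{
    size_t j = 0;
    for (size_t i = 0; in[i] != '\0' && j + 1 < outsz; i++) {
        if (in[i] == '-' && in[i + 1] == '\n')
            i++;                    /* skip the hyphen and the newline */
        else if (in[i] == '\n')
            out[j++] = ' ';
        else
            out[j++] = in[i];
    }
    out[j] = '\0';
}

/* The instructions quoted above, used as a prefix for every Constraint. */
static const char PROMPT_PREFIX[] =
    "Write a sequence of very short programs which tests that a C compiler "
    "correctly flags each violation of the requirements contained in the "
    "following excerpt from the latest draft of the C Standard: ";

/* Hypothetical helper: writes prefix + cleaned Constraint text into out and
   returns what snprintf returns (the untruncated length).  Constraint text
   longer than the 4096-byte scratch buffer is silently truncated. */
static int build_prompt(const char *constraint_text, char *out, size_t outsz)
{
    char cleaned[4096];
    rejoin_lines(constraint_text, cleaned, sizeof cleaned);
    return snprintf(out, outsz, "%s%s", PROMPT_PREFIX, cleaned);
}
```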

Experiments using Grok and ChatGPT 4o showed that both generated technically correct tests, but Grok generated code that was intended to be run (and was verbose), while the ChatGPT 4o output was brief and to the point; it did such a good job that I did not try any other LLMs. For this extended test, use of the web interface proved to be an effective approach. Interfacing via the API is probably more practical for larger numbers of requirements.

After some experimentation, I submitted the text from 31 Constraint clauses (I picked the non-trivial ones). The complete text of the questions and ChatGPT 4o responses (text files).

ChatGPT sometimes did not generate tests for all the requirements, when these were presented as they appeared in the Constraint, but did generate tests when the containing sentence was presented in isolation from other requirement sentences. For instance, the following sentence from clause 6.5.5 Cast Operators:

Conversions that involve pointers, other than where permitted by the constraints of 6.5.17.2, shall be specified by means of an explicit cast.

was ignored when included as part of the complete Constraint, but when presented in isolation, reasonable tests were generated.

The responses never contained more than 10 test cases. I am guessing that this is the result of limits on response cpu time/length. Dividing the text of longer Constraints should solve this issue.
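Splitting a Constraint into individual sentences, so that each one can be submitted in isolation, could be sketched as follows. The end-of-sentence rule (a full stop followed by whitespace or the end of the text) is an assumption that happens to leave clause references such as 6.5.17.2 intact; abbreviations would need extra handling.

```c
#include <stddef.h>
#include <string.h>

/* Whitespace characters expected in the extracted Constraint text. */
static int is_space(char c)
{
    return c == ' ' || c == '\n' || c == '\t';
}

/* Hypothetical splitter: copies the sentence starting at *pos into out
   (outsz must be > 0), advances *pos past it, and returns 1; returns 0
   when only whitespace remains.  A sentence is assumed to end at a '.'
   followed by whitespace or the end of the text, so "6.5.17.2," is not
   split, but abbreviations such as "e.g." would be. */
static int next_sentence(const char **pos, char *out, size_t outsz)
{
    const char *s = *pos;
    while (is_space(*s))
        s++;                      /* skip leading whitespace */
    if (*s == '\0')
        return 0;

    const char *e = s;
    while (*e != '\0' && !(*e == '.' && (e[1] == '\0' || is_space(e[1]))))
        e++;
    if (*e == '.')
        e++;                      /* keep the full stop with the sentence */

    size_t len = (size_t)(e - s);
    if (len >= outsz)
        len = outsz - 1;          /* truncate to fit the output buffer */
    memcpy(out, s, len);
    out[len] = '\0';
    *pos = e;
    return 1;
}
```

Calling next_sentence in a loop until it returns 0 yields one requirement sentence at a time, each of which can then be given the same instruction prefix.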

Some assumptions made by ChatGPT 4o about the implementation can be deduced from its responses, e.g., it appears to treat the type short as containing fewer than 32 bits (it assumes that a bit-field defined as a short containing 32 bits will be treated as a Constraint violation). This is not surprising, given the volume of public C source targeting the Intel x86.
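One way to keep such an implementation assumption out of a test is to compute the offending width from the type itself. The sketch below is hypothetical (not one of the generated tests) and uses unsigned int, whose use in bit-fields the Standard guarantees, whereas support for short bit-fields is implementation-defined. The width of a type can never exceed sizeof(type) * CHAR_BIT, so adding one always violates the width Constraint.

```c
#include <limits.h>

/* Portable negative test for the bit-field width Constraint: whatever the
   size of unsigned int, the width below exceeds it by at least one bit.
   A test written as "short x : 32;" only detects anything when short
   happens to be narrower than 32 bits. */
struct flags {
    unsigned int ok : 1;          /* always valid */
#ifdef VIOLATION_WIDTH
    unsigned int too_wide : (int)(sizeof(unsigned int) * CHAR_BIT) + 1;
#endif
};

/* Exercises the valid member so the file does something when no
   violation is selected. */
static int set_ok(void)
{
    struct flags f = { 0 };
    f.ok = 1;
    return f.ok;
}
```

Compiling with -DVIOLATION_WIDTH should produce a diagnostic on every conforming implementation.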

I was impressed by the quality of the 242 test cases generated by ChatGPT 4o, which often included multiple tests for the same requirement (text files).

While it sometimes failed to produce a test for a requirement, I did not spot any incorrect tests (as in, not correctly testing for a violation of a listed requirement), and the subset of tests I fed through a compiler behaved as claimed. I eventually found a prompt that appears to be creating a downloadable zip file of all the tests (most prompts resulted in a zip file containing some collection of 10 tests); the creation process is currently waiting for available cpu time. I now know that downloading a zip file containing one file per test, after each user prompt, is the more reliable option.

Compiler validation is now part of history

Compiler validation makes sense in a world where there are many different hardware platforms, each with their own independent compilers (third parties often implemented compilers for popular platforms, competing against the hardware vendor). A large organization that spends hundreds of millions on a multitude of computer systems (e.g., the U.S. government) wants to keep prices down, which means the cost of porting its software to different platforms needs to be kept down (or at least suppliers need to think it will not cost too much to switch hardware).

A crucial requirement for source code portability is that different compilers be able to compile the same source, generating code that produces the same behavior. The same behavior requirement is an issue when the underlying word-size varies or has different alignment requirements (lots of code relies on data structures following particular patterns of behavior), but management on all sides always seems to think that being able to compile the source is enough. Compiler vendors often supported extensions to the language standard, and developers got to learn they were extensions when porting to a different compiler.
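A small C illustration of the word-size/alignment problem. The struct is hypothetical, and the offsets in the comments are typical values (4 on a common 32-bit target, 8 on x86-64 Linux), not guarantees. Writing fields out byte by byte in an agreed order is one conventional way of removing the dependency on the compiler’s layout choices.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical record whose in-memory layout is chosen by the compiler. */
struct record {
    char tag;
    long value;     /* size and alignment of long vary between platforms */
};

/* Typically 4 on a 32-bit target and 8 on x86-64 Linux: code that writes
   struct record to a file, or overlays it on a byte buffer, inherits
   whichever value the compiler picked. */
static size_t value_offset(void) { return offsetof(struct record, value); }
static size_t record_size(void)  { return sizeof(struct record); }

/* Portable alternative: store a 32-bit value byte by byte in an agreed
   order (little-endian, an arbitrary choice here), independent of the
   host's layout and byte order. */
static void put_u32_le(unsigned char *buf, uint32_t v)
{
    buf[0] = (unsigned char)(v & 0xFFu);
    buf[1] = (unsigned char)((v >> 8) & 0xFFu);
    buf[2] = (unsigned char)((v >> 16) & 0xFFu);
    buf[3] = (unsigned char)((v >> 24) & 0xFFu);
}
```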

The U.S. government funded a conformance testing service, and paid for compiler validation suites to be written (source code for what were once the Cobol 85, Fortran 78 and SQL validation suites). While it was in business, this conformance testing service was involved in C compiler validation, but it did not have to fund any development because commercial test suites were available.

The 1990s was the mass-extinction decade for companies selling non-Intel hardware. The widespread use of Open source compilers, coupled with the disappearance of lots of different cpus (porting compilers to new vendor cpus was always a good money spinner, for the compiler writing cottage industry), meant that many compilers disappeared from the market.

These days, language portability issues have been essentially solved by a near monoculture of compilers and cpus. It’s the libraries that are the primary cause of application portability problems. There is a test suite for POSIX and Linux has its own tests.

There are companies selling C/C++ compiler test suites (e.g., Perennial and PlumHall); when maintaining a compiler, it’s cost-effective to have a set of third-party tests designed to exercise all the language.

The Open Group offers to test your C compiler and issue a brand certificate if it passes the tests.

Source code portability requires compilers to have the same behavior, and traditionally the generally accepted behavior has been defined by an ISO Standard or by how one particular implementation behaved. In an Open source world, behavior is defined by what needs to be done to run the majority of existing code. Does it matter if Open source compilers evolve in a direction that is different from the behavior specified in an ISO Standard? I think not; it makes no difference to the majority of developers. But be careful, saying this can quickly generate a major storm in a tiny teacup.

C compiler validation is 21 today!

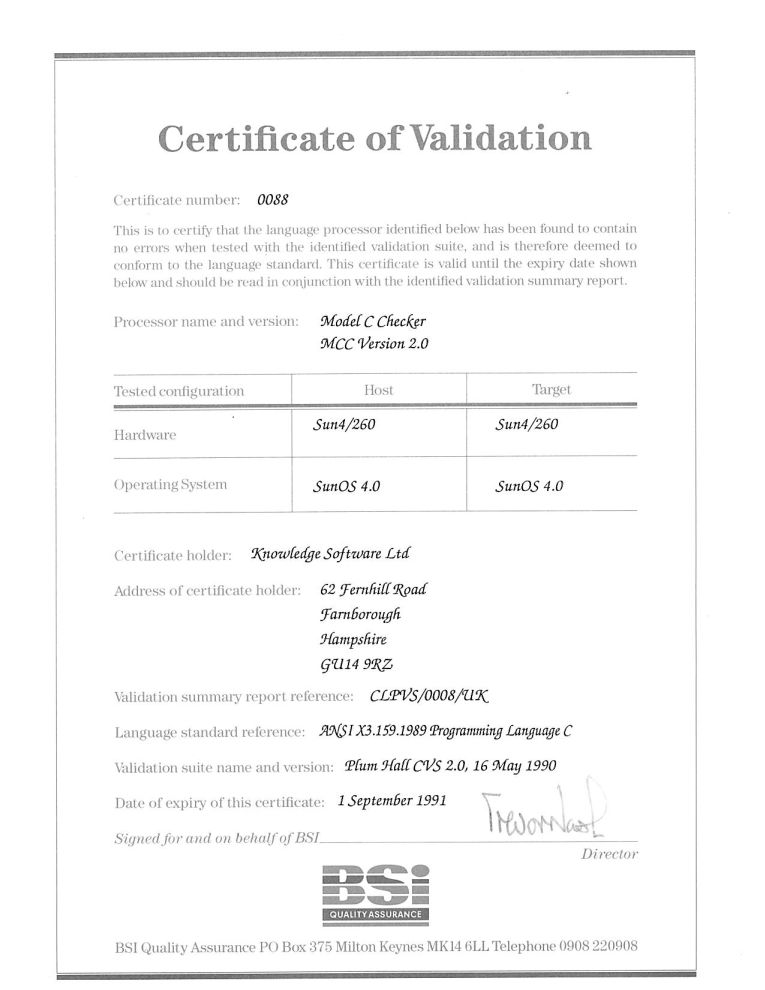

Today, 1 September 2011, is the 21st anniversary of the first formally validated C compilers. The three ‘equal first’ validated compilers were the Model Implementation C Checker from Knowledge Software, Topspeed C from JPI (run by the people who created Turbo Pascal) and the INMOS C compiler (derived from the Norcroft C compiler written by Alan Mycroft and others; Mycroft was the author of the longest response document seen during the review of the C89 draft standard).

Back in the day the British Standards Institution testing group run by John Souter were the world leaders in compiler validation and were very proactive in adding support for a new language. NIST, the equivalent US body, did not offer such a service until a few years later. Those companies in a position to have their compilers validated (i.e., the compiler passed the validation suite) were pressing BSI to be first; the ‘who is first’ issue was resolved by giving all certificates the same date (the actual validation process of a person from BSI, Neil Martin now Director of Test in the Winterop Team at Microsoft, turning up to ‘witness’ the compiler passing the tests happened several weeks earlier).

Testing C compilers was different from other language compilers in that sufficient demand existed to support commercial production and maintenance of test suites (the production of validation suites for previous language compilers had been government funded). After a review of the available test suites BSI chose to use the Plum Hall suite; after a similar review NIST chose to use the Perennial suite (I got involved in trying to figure out for NIST how well this suite covered the requirements contained in the C Standard).

For a while C compiler validation was big business (as in big fish, very small pond). But the compiler validation market is dependent on there being lots of compilers, which requires market fragmentation and to a lesser extent lots of different OSs and hardware platforms (each needing a separate validation). The 1990s saw market consolidation, gcc becoming good enough for commercial use and a shift of developer mind share to C++. Dwindling revenue resulted in BSI’s compiler validation group being shut down after a few years and NIST’s followed in 1998.

Is compiler validation relevant today? When the first C Standard was published, a lot of compilers in common use had some significant behavioural differences compared to what the Standard specified. Over time these compilers have either disappeared or been upgraded (a potential customer once asked me what benefits I saw in them licensing the Knowledge Software front end, and the reply to one of my responses, “you can tell your customers that the compiler is Standard compliant”, was that this was not a benefit as they had been claiming this for years). Improvements in Intel’s x86 processor also had a hand in improving compiler Standard conformance; the various memory models used by the x86 processor were a huge headache for compiler writers, whose products often behaved very differently under different memory models; the arrival of the Pentium with its flat 32-bit address space meant this issue disappeared over time.

These days I suspect that the major compilers targeting platforms where portability is expected (portability is often not a big expectation in the embedded world) are sufficiently compatible that developers are willing to overlook small differences with the Standard. Differences in third party libraries, GUIs and other frameworks have been the big headache for many years now.

Would the ‘platform portability’ compilers, that’s probably gcc, Microsoft, products using EDG’s front end, and perhaps llvm in the coming years, pass the latest version of the PlumHall and Perennial suites?

- The gcc team do not have access to either company’s suite. The gcc regression tests are a poor substitute for a proper compiler validation suite (even though they cost many thousands of dollars, commercial compiler writers often buy both companies’ products because they are good value for money as a testing resource; the Fortran 78 validation suite source gives some idea of how much work is actually involved). I would expect gcc to fail some of the tests, but have no idea how many failures there would be or how serious they would be.

- Microsoft have said they don’t have plans to support C99 (it took a lot of prodding to get them interested in formally validating against C90).

- I think the llvm team are in the same position as gcc, but perhaps somebody at Apple has access to one or more of the commercial suites (I don’t know).

- EDG are into Standards conformance and I would expect them to pass both suites.

The certificate is printed on high quality, slightly yellow paper; the template wording is in a subdued gray ink while the customer information is in a very bold black ink. I don’t know whether this is to make life difficult for counterfeiters, but I could not get any half decent photographs and the color scanner had to be switched to black&white.

Validation was good for one year and I saw no worthwhile benefit in paying BSI £5,000 to renew for another year. Few people knew about the one year rule and I did not enlighten them. In the Ada compiler market the one year rule was a major problem, but let’s leave that for another time.

Estimating the quality of a compiler implemented in mathematics

How can you tell if a language implementation done using mathematical methods lives up to the claims being made about it, without doing lots of work? Answers to the following questions should give you a good idea of the quality of the implementation, from a language specification perspective, at least for C.

- How long did it take you to write it? I have yet to see any full implementation of a major language done in less than a man year; just understanding and handling the semantics, plus writing the test cases, will take this long. I would expect an answer of at least several man years.

- Which professional validation suites have you tested the implementation against? Many man years of work have gone into the Perennial and PlumHall C validation suites, and correctly processing either of them is a non-trivial task. The gcc test suite is too light-weight to count. The C Model Implementation passed both.

- How many faults have you found in the C Standard that have been accepted by WG14 (DRs for C90 and C99)? Everybody I know who has created a full implementation of a C front end based on the text of the C Standard has found faults in the existing wording. Creating a high quality formal definition requires great attention to detail and it is to be expected that some ambiguities/inconsistencies will be found in the Standard. C Model Implementation project discoveries include these and these.

- How many ‘rules’ does the implementation contain? For the C Model Implementation (originally written in Pascal and then translated to C) every if-statement it contained was cross referenced to either a requirement in the C90 standard or to an internal documentation reference; there were 1,327 references to the Environment and Language clauses (200 of which were in the preprocessor and 187 involved syntax). My C99 book lists 2,043 sentences in the equivalent clauses, consistent with a 70% increase in page count over C90. The page count for C1X is around 10% greater than C99. So for a formal definition of C99 or C1X we are looking at around 2,000 language specific ‘rules’, plus others associated with internal housekeeping functions.

- What percentage of the implementation is executed by test cases? How do you know code/mathematics works if it has not been tested? The front end of the C Model Implementation contains 6,900 basic blocks of which 87 are not executed by any test case (98.7% coverage); most of the unexecuted basic blocks require unusual error conditions to occur, e.g., disc full, and we eventually gave up trying to figure out whether a small number of them were dead code or just needed the right form of input (these days genetic programming could be used to help out and also to improve the quality of coverage to something like say MC/DC, but developing on a PC with a 16M hard disc does limit what can be done {the later arrival of a Sun 4 with 32M of RAM was mind blowing}).
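As an illustration of the ‘rules’ and coverage questions above, here is a hypothetical sketch (not the Model Implementation’s code) in which every diagnostic check names the requirement it implements and counts its executions, so that rules never exercised by a test suite can be listed. Clause references use C11 numbering purely for illustration, and the type model is drastically simplified.

```c
#include <stddef.h>

/* Hypothetical sketch: each check that can issue a diagnostic is tied to
   the requirement it implements, and counts how often it is evaluated. */

enum kind { K_INT, K_DOUBLE, K_POINTER, K_STRUCT };  /* drastically simplified types */

struct rule {
    const char *ref;          /* requirement reference (C11 numbering here) */
    unsigned long hits;       /* number of times the check was evaluated */
};

enum { RULE_ADD, RULE_SUB };

static struct rule rules[] = {
    [RULE_ADD] = { "C11 6.5.6p2: operands of binary +", 0 },
    [RULE_SUB] = { "C11 6.5.6p3: operands of binary -", 0 },
};

static int is_arithmetic(enum kind k) { return k == K_INT || k == K_DOUBLE; }

/* Returns the violated requirement, or NULL if the operands are valid.
   K_POINTER stands for a pointer to a complete object type. */
static const char *check_addition(enum kind l, enum kind r)
{
    rules[RULE_ADD].hits++;
    if (is_arithmetic(l) && is_arithmetic(r))
        return NULL;
    if ((l == K_POINTER && r == K_INT) || (l == K_INT && r == K_POINTER))
        return NULL;
    return rules[RULE_ADD].ref;
}

/* Percentage of rules whose check has been evaluated at least once. */
static double rule_coverage(void)
{
    size_t n = sizeof rules / sizeof rules[0];
    size_t hit = 0;
    for (size_t i = 0; i < n; i++)
        if (rules[i].hits > 0)
            hit++;
    return 100.0 * (double)hit / (double)n;
}
```

The subtraction check is deliberately left unimplemented, so after tests that only exercise addition, rule_coverage() reports 50%, flagging the unexercised requirement.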

Other suggested questions or numbers applicable to other languages most welcome. Some forms of language definition do not include a written specification, which makes any measurement of implementation conformance problematic.

Proving software correct

Users want confidence that software is ‘correct’; what constitutes correct depends on who you talk to and can vary between doing what the user expects and behaving according to a specification (which may include behavior that users did not expect or want).

The gold standard for software correctness is that achieved by mathematical proofs, or at least what most people believe is achieved by such proofs, i.e., a statement that is shown through a sequence of steps to be derived from a set of axioms. The sequence of steps used in most real proofs operate at a much higher level than axioms and rely on the reader to fill in the gaps left between each step. Ever since theorems were first stated they sometimes contained faults, i.e., were not correct theorems, and as mathematicians have continued to increase the size and complexity of theorems being ‘proved’ the technical and social issues involved in believing a published proof have grown in complexity.

Software proofs usually operate by translating the source into some mathematical formalism and using a theorem prover to show that one or more properties are met. Perhaps the most famous use of such a proof that had an outcome different from that predicted is the 1996 Ariane 5 rocket crash; various proofs had been obtained for the Ariane 4 software showing that the value of some variables would never exceed given limits, these proofs involved input values that depended on the performance of the rocket, and because Ariane 5 was more powerful than Ariane 4 the proofs were no longer valid (management would have found this out had they rechecked the proofs using the larger values). Update: My only knowledge of this work comes from a conversation I recall with somebody working in the formal verification area; I no longer have contact with them and the company they worked for no longer exists. Pascal Cuoq’s comment below suggests they may have overstated the formal nature of the work; I have no means of double checking.
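A minimal C sketch of why such a result is conditional. The widely reported Ariane 5 failure involved converting a 64-bit floating-point value to a 16-bit signed integer; the bounds below are hypothetical and only illustrate that a ‘cannot overflow’ property is established relative to an assumed input range, and stops holding when the inputs change.

```c
#include <stdint.h>

/* Hypothetical input range under which "the conversion never overflows"
   was shown to hold; these are not real flight values. */
#define ASSUMED_MIN (-20000.0)
#define ASSUMED_MAX ( 20000.0)

/* Checked conversion: stores the result and returns 0 when value fits in
   int16_t, returns -1 otherwise (conservatively rejecting fractional
   values just outside the range).  Converting an out-of-range double to
   an integer type without such a check is undefined behavior in C. */
static int to_int16(double value, int16_t *out)
{
    if (!(value >= INT16_MIN && value <= INT16_MAX))   /* also rejects NaN */
        return -1;
    *out = (int16_t)value;
    return 0;
}

/* The earlier argument only covers inputs inside the assumed range. */
static int within_assumption(double value)
{
    return value >= ASSUMED_MIN && value <= ASSUMED_MAX;
}
```

Any input for which within_assumption returns 0 is outside what the original argument covered, so the property has to be re-established, not assumed.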

Purveyors of ‘software proof’ systems will tell you about the importance of feeding in the correct input values and will tell you about the known proofs they have managed to verify using their system. The elephant in the room that rarely gets mentioned is the correctness of the program that translates source code into the mathematical formalism used. These translators often handle that subset of the language which is relatively easy to map to the target formalism, the MALPAS C to IL translator is one exception to this (ok, yes my company wrote this translator so the opinion might be a little biased).

The method commonly associated with claims of correctness proof for a translator or compiler is slightly different from that described above for applications. This method involves manually writing some mathematics, using the chosen formalism, that ‘implements’ the translator/compiler. Strangely there are people who think that doing this is sufficient to claim the compiler is ‘verified’ or ‘proved correct’. As any schoolboy knows it is possible to write mathematics that contains mistakes and the writing of a mathematical implementation is just the first step in a process intended to increase confidence in a claim of correctness.

One of the questions that might be asked of a ‘mathematics implementation’ of a compiler is: does it faithfully interpret source code syntax/semantics according to the syntax/semantics specified in the appropriate language document?

Answering this question requires that the language syntax/semantics be specified in some mathematical notation that is amenable to formal analysis. Various researchers have created mathematical models for languages such as Ada, CHILL and C. However, these models are not recognized as being definitive; that status belongs to the corresponding ISO Standard written in English prose. The Modula-2 standard is specified using both English prose and equivalent mathematical notation, with both having equal status as the definition of the language (any inconsistency between the two is decided by analyzing what behavior was intended); there were lots of plans to do stuff with this mathematics, but the ISO language committee struggled just to produce a tool capable of printing the mathematics.

The developers of the Compcert system refer to it as a formally verified C compiler front-end when the language actually verified is called Clight, which they describe as a subset of the C language. This is very interesting work and I hope they continue to refine it and add support for more C-like constructs. But let’s be clear, the one thing missing from this project is any proof of a connection to the requirements contained in the C Standard.

I don’t know what it is about formal verification but those involved can at the same time be both very particular about the language they use in their mathematics and completely over the top in the claims they make about what their tools do. A speaker from Polyspace at one MISRA C conference claimed his tool could detect 100% of the coding guidelines specified in MISRA C, a surprising achievement for a runtime tool (as it was then) enforcing requirements mainly aimed at source code; I eventually got him to agree that the tool detected 100% of the constructs specified by the small subset of guidelines they had implemented.

I doubt that the Advertising Standards Authority would allow adverts containing the claims made by some formal verification advocates to appear in print or on TV; if soap manufacturers have to follow ASA rules then so should formal verification researchers.

Without a language specification written in a form amenable to mathematical analysis any claims of correctness have to be based on the traditional means of reading English prose very carefully and writing lots of tests to probe every obscure corner of the language specification. This was the approach used for the production of the Model Implementation of C, a system designed to detect all unspecified, implementation defined and undefined uses in C programs (it used a compiler, linker and interpreter). One measure of how well an implementor has studied the standard is how many faults they have discovered in it (some people claim this is a quality of standard issue, but the similar number of defects reported against the Ada and C Standards show that at least for Ada this is not true); here are some from the Model Implementation project.

Performance on independently written tests can be a good indicator of implementation correctness, depending on the quality of the tests. Both the Perennial and PlumHall C validation suites are of high quality, while suites such as the gcc testsuite are rather ad-hoc, have poor coverage and tend to be runtime oriented. The problem with high quality validation suites is that they cost enough money to put them out of reach of many research groups (I suspect another problem is that such groups don’t understand the benefits of using such suites or think they can do just as good a job in a few weeks).

Recently a new formal verification tool for C has appeared that performs all its verification checking at program runtime, i.e., after the user source has been translated to executable form. It is still very early days for kcc (they have yet to choose a name, and kcc, the command used to invoke the translator, is currently being used); they have an initial system up and running and are keen to continue improving it.

I am interested in the system because of what it might evolve into, including:

- a means of quickly checking the behavior of obscure bits of code (I get asked all sorts of weird questions and my brain is not always willing to switch to C language lawyer mode),

- a means of checking the consistency of the requirements in the C Standard, which will require another tool making use of the formalism built up by kcc,

- a tool which would help developers understand which parts of the C Standard they need to look at to understand some construct (the tool currently has a trace mode that needs lots of work).
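The obscure questions mentioned in the first item often have answers that follow from a single rule in the Standard. A few examples, with the reasoning in the comments; the function names are illustrative, and a tool of the kind described above could be used to confirm such answers.

```c
#include <stddef.h>

/* -1 is converted to unsigned int (yielding UINT_MAX) before the
   comparison, so the result is 0. */
static int minus_one_less_than_zero_u(void) { return -1 < 0u; }

/* Both unsigned char operands undergo the integer promotions before the
   addition, so the sum does not wrap at 255. */
static int promoted_sum(void)
{
    unsigned char a = 200, b = 100;
    return a + b;
}

/* The operand of sizeof is not evaluated (it is not a variable length
   array), so n is never incremented. */
static int sizeof_side_effect(void)
{
    int n = 0;
    size_t s = sizeof(n++);
    (void)s;
    return n;
}
```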

SQL usage: schema evolution

My first serious involvement with SQL, about 15 years ago, was writing a parser for the grammar specified in the ISO SQL-92 Standard. One of the things that surprised me about SQL was how little source code was generally available (for testing) and the almost complete lack of any published papers on SQL usage (it’s always better to find out where the pot-holes are from other peoples’ experience).

The source code availability surprise is largely answered by the very close coupling between source and data that occurs with SQL; most SQL source is closely tied to a database schema, and unless you need to process exactly the same kind of data you are unlikely to have any interest in having access to the corresponding SQL source. The growth in usage of MySQL means that these days it is much easier to get hold of large amounts of SQL (large is a relative term here; I suspect that there are probably many orders of magnitude fewer lines of SQL in existence than of other popular languages).

In my case I was fortunate in that NIST released their SQL validation suite for beta testing just as I started to test my parser (it had taken me a month to get the grammar into a manageable shape).

Published research on SQL usage continues to be thin on the ground and I was pleased to recently discover a paper combining empirical work on SQL usage with another rarely researched topic, declaration usage e.g., variables and types or in this case schema evolution (for instance, changes in the table columns over time).

The researchers only analyzed one database, the 171 releases of the schema used by Wikipedia between April 2003 and November 2007, but they also made their scripts available for download and hopefully the results of applying them to lots of other databases will be published.

Not being an experienced database person, I don’t know how representative the Wikipedia figures are; the number of tables increased from 17 to 34 (a 100% increase) and the number of columns from 100 to 242 (142%). A factor of two increase sounds like a lot, but I suspect that all but one of these columns occupy a tiny fraction of the 14GB that is the current English Wikipedia.
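The percentage figures above, and the kind of column-level comparison a schema evolution study makes between releases, can be sketched in C. This is a hypothetical helper, not the researchers’ scripts; a fuller analysis would also need to handle renamed columns and type changes.

```c
#include <stddef.h>
#include <string.h>

/* Percentage increase, as used for the Wikipedia table/column counts. */
static double pct_increase(double from, double to)
{
    return 100.0 * (to - from) / from;
}

/* Hypothetical helper: number of names in new_cols that do not appear in
   old_cols, i.e., columns added between two schema versions.  Swapping
   the arguments counts removed columns.  A linear search is adequate for
   a few hundred columns. */
static size_t count_added(const char *const *old_cols, size_t n_old,
                          const char *const *new_cols, size_t n_new)
{
    size_t added = 0;
    for (size_t i = 0; i < n_new; i++) {
        int found = 0;
        for (size_t j = 0; j < n_old && !found; j++)
            found = strcmp(new_cols[i], old_cols[j]) == 0;
        if (!found)
            added++;
    }
    return added;
}
```

pct_increase(17, 34) gives 100 and pct_increase(100, 242) gives 142, the figures quoted above.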
