A signature for the “embeddedness” of source code and developers?

Patterns in the use of source code can tell us a lot about the people who wrote the code, the characteristics of the hardware it runs on and what the application is all about.

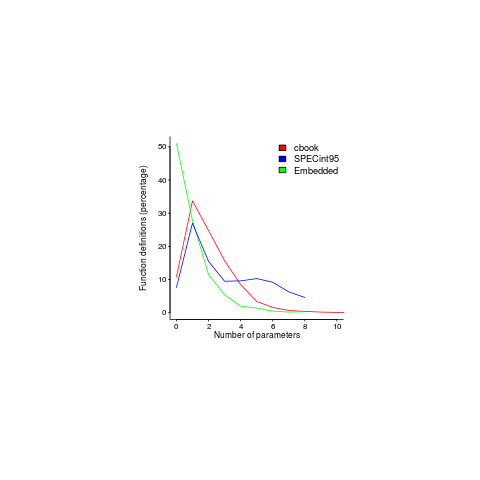

Often the pattern of usage takes a lot of work to understand, and many remain completely baffling, but every now and again the forces driving a pattern leap off the page. One such pattern is visible in the accompanying plot (assuming you know something about the nitty gritty of embedded software development). It shows the percentage of functions defined to have a given number of parameters; the embedded data is courtesy of Jacob Engblom and the cbook data is from my C book.

Embedded software has to run in very constrained environments. The hardware is often mass produced and saving a penny per device can add up to big savings, so the cheapest processor is chosen and populated with the smallest possible memory; developers have to work with what they are given. Power consumption may be down below one watt, so clock speeds are closer to 1 MHz than 1 GHz.

Parameter passing is a relatively expensive operation and there are major savings, relatively speaking, to be had by using global variables. Experienced embedded developers know this and this plot is telling us that they are acting on this knowledge.

The following are two ways of interpreting the embedded data (I cannot think of any others that make sense):

- the time/resource critical functions use globals rather than parameters and all the other functions are written more or less the same as in a non-embedded environment. In statistical terms this behavior is described by a zero-inflated model,

- there is pressure on the developer to reduce the number of parameters in all function definitions.

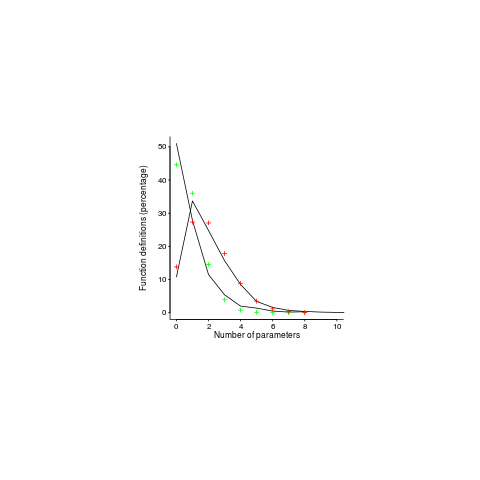

This data contains counts, so a Poisson distribution is the obvious candidate for our model.

My attempts to fit a zero-inflated model failed miserably (code+data). A basic Poisson distribution fitted everything reasonably well (let’s ignore that tiresome bump in the blue line); plus signs are the predictions made from each fitted model.
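For readers who have not met one, a zero-inflated Poisson model treats the counts as a mixture: some fraction of functions always have zero parameters, and the rest follow an ordinary Poisson distribution. The following is a minimal sketch of that probability function, not the fitting code linked above; the names `zip_pmf` and `pi_zero`, and the example values, are invented for illustration and are not taken from the data.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """Probability that a Poisson(lam) count equals k."""
    return math.exp(-lam) * lam**k / math.factorial(k)


def zip_pmf(k: int, lam: float, pi_zero: float) -> float:
    """Zero-inflated Poisson probability of observing the count k.

    With probability pi_zero the count is forced to zero (the
    hypothetical 'always uses globals' functions); otherwise the
    count is drawn from Poisson(lam).
    """
    if not 0.0 <= pi_zero <= 1.0:
        raise ValueError("pi_zero must lie in [0, 1]")
    base = (1.0 - pi_zero) * poisson_pmf(k, lam)
    return pi_zero + base if k == 0 else base


# Illustrative values only: 30% forced zeros on top of Poisson(2).
for k in range(6):
    print(f"{k} params: plain {poisson_pmf(k, 2.0):.3f}  "
          f"zero-inflated {zip_pmf(k, 2.0, 0.3):.3f}")
```

Under the first interpretation the zero-parameter bar would stick out above an otherwise desktop-like curve; under the second the whole curve shifts towards zero, which is what a plain Poisson with a smaller λ describes.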

For desktop developers, the distribution of function definitions having a given number of parameters follows a Poisson distribution with a λ of 2, while for embedded developers λ is 0.8.
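To get a feel for what these two values of λ imply, the Poisson probabilities can be tabulated directly. This is a minimal sketch, not the code used for the fits; the function names are made up for illustration.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """Probability that a Poisson(lam) count equals k."""
    return math.exp(-lam) * lam**k / math.factorial(k)


def percent_by_param_count(lam: float, max_params: int = 8) -> list[float]:
    """Predicted percentage of functions having 0..max_params parameters."""
    return [100.0 * poisson_pmf(k, lam) for k in range(max_params + 1)]


desktop = percent_by_param_count(2.0)   # lambda quoted for desktop code
embedded = percent_by_param_count(0.8)  # lambda quoted for embedded code

for k, (d, e) in enumerate(zip(desktop, embedded)):
    print(f"{k} params: desktop {d:5.1f}%  embedded {e:5.1f}%")
```

With λ = 0.8 roughly 45% of functions are predicted to have no parameters, against roughly 14% for λ = 2.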

What about values of λ between 0.8 and 2; perhaps the λ of a project’s, or developer’s, code parameter count can be used as an indicator of ’embeddedness’?
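One way such an indicator might be computed: the maximum likelihood estimate of a Poisson λ is simply the mean of the observed counts, so a project's λ is its average number of parameters per function definition. The sketch below assumes the per-function counts have already been extracted; the sample counts are invented, and the linear 0-to-1 scaling between the two fitted values is purely a hypothetical choice of mine, not something the data supports.

```python
def estimate_lambda(param_counts: list[int]) -> float:
    """Maximum likelihood estimate of a Poisson rate: the sample mean."""
    if not param_counts:
        raise ValueError("need at least one function definition")
    return sum(param_counts) / len(param_counts)


def embeddedness(lam: float,
                 embedded_lam: float = 0.8,
                 desktop_lam: float = 2.0) -> float:
    """Hypothetical score: 1.0 at embedded_lam, 0.0 at desktop_lam.

    Values outside the range are clamped to [0, 1].
    """
    score = (desktop_lam - lam) / (desktop_lam - embedded_lam)
    return max(0.0, min(1.0, score))


# Invented parameter counts, one per function definition.
sample_counts = [0, 0, 1, 1, 2, 0, 3, 1, 0, 2]
project_lam = estimate_lambda(sample_counts)
print(f"lambda = {project_lam:.2f}, "
      f"embeddedness = {embeddedness(project_lam):.2f}")
```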

What is needed is parameter count data from a range of 4-bit, 8-bit and 16-bit systems, and measurements of developers who have been working in the field for, say, 4, 8, 16 years. Please let me know if you have any.

The data is from a Master's thesis written in 1999; is it still relevant today? Have modern companies become kinder to developers and stopped making their lives so hard by saving pennies when building mass produced products? Are modern low-power devices being used so values can be passed via parameters rather than via globals, or are they being used for applications where even less power is available?

One difference from 20 years ago is that embedded devices are more mainstream, easier to get hold of and sales opportunities abound. This availability creates an environment where developers with a desktop development mentality (which developers new to embedded always seem to have had) don’t get to learn about the overheads of parameter passing.

Have compilers gotten better at reducing the function parameter overhead? The most obvious optimization is inlining a function at the point of call. If the function is only called once, this works fine; with multiple calls the generated code can get larger (one of the things we are trying to avoid). I don’t have any reliable data on modern compiler performance in this area, but then I have not looked hard. Pointers to benchmarks welcome.

Does embedded software have any other signatures that differentiate it from desktop software (other than the obvious one of specifying addresses in definitions of global variables)? Suggestions welcome.

Dead giveaway: Is the code MISRAble? 😛

Embedded systems never seem to have enough CPU, memory, or other important resources. That leads to numerous custom optimizations, depending on the situation at hand.

Depending on the hardware architecture and function calling convention, parameter passing may have a large overhead, or it may be relatively “free”. How big is the register file? How many bytes to encode and CPU cycles to decode a passed parameter? In my limited experience, low parameter counts are due as much to whole-program optimization (global knowledge) as they are to function call overhead.

Expect to see a lot more bit twiddling, small look-up tables, gotos, etc. Also look for memory-mapped I/O, snippets of embedded assembly, etc. Many embedded applications contain what little there is of an OS…

Most embedded system guides strongly discourage memory management after startup. It is common that the entire memory map is set at compile/link time. Dynamic linking is rare. Look for big tables that serve as scratch space and object pools. Look for addresses that are re-used for different purposes during execution. Look for registers with fixed meaning. Depending on the architecture, special attention is often paid to align memory for vector units, to pack variables to avoid wasted space, etc. This information may be stored in a ROM, or it may be calculated during initialization before the main event loop starts.

Most embedded system guides strongly discourage unbounded loops, recursion, etc. This bounds both execution time and stack space. Multi-threading is rare and strictly managed. Expect an event loop, often constantly running, sometimes select()-style. Many loops check flags that are set by custom interrupt handlers.

Many optimizations performed for embedded systems are not readily apparent in the final product. They only stand out when compared to the original reference implementation. Algebraic simplifications, numeric approximations, statistical approximations, and re-ordering calculations to produce useful byproducts are all examples of this.

@D Herring

Register file? We are starting to talk rather up market cpus here.

All programs contain bit-twiddling, but if it is tucked away in a library the amount of source code involved will be small. The same goes for library calls, although in some cases the counts for embedded code will be zero rather than just very small.

Recursion is not that common outside of compilers and computer science stuff. Call-backs are common in user interface code.

Code size is an obvious differentiator, especially with 4-bit targets (of which I have no hands on experience).

16-bit integers and extensive use of 8-bit values for arithmetic operations is the one clear signal I could think of.