Archive

Learning R as a language

Books written to teach a general purpose programming language are usually organized according to the features of the language and examples often show how a particular language feature is interpreted by a compiler. Books about domain specific languages are usually organized in a way that makes sense in the corresponding application domain and examples usually illustrate how a particular domain problem can be solved using the language.

I have spent a lot of time using R over the last year and by dint of reading lots of R code and various introductions to the language I have managed to piece together a model of the language. I rarely have any trouble learning a general purpose language from its reference manual, but users of domain specific languages are rarely interested in language details and so these reference manuals are usually only intended to be read by people who know the language well (another learning problem is that domain specific languages often contain quirky features rarely seen in other languages; in the case of R I was not lucky enough to know enough other languages to cover all its quirky features).

I managed to find one introduction to R written from the perspective of the programming language (and not the application domain): the original The Art of R Programming by Norman Matloff has been expanded and is now available as a book.

Summary. If you know another language and want to quickly learn about the language features of R, I recommend this book. I have not taught raw beginners for over 30 years and have no idea if this book would be of any use to them.

This book does not attempt to teach you to think ‘R’, it is not about the art of R programming. The value of this book is as a single source for a broad coverage of lots of language features explained using lots of examples. Yes, more time could have been spent on the organization and fixing inconsistencies in the layout; these are not show stoppers.

Some people might tell you to buy “Software for Data Analysis” by John Chambers. Don’t; if you are a fan of Finnegans Wake and are nostalgic for the mainframe world of the 1970s you might like to give it a go. (I think Bertrand Meyer’s “Object-oriented Software Construction” is still the best book about the design of a language).

Meanderings. What books are good examples of “The Art of …” writing for domain specific languages? Two that spring to mind are: “Algorithms in Snobol 4” by James Gimpel (still spotted from time to time on second hand book sites) and more recently “SQL For Smarties: Advanced SQL Programming” by Joe Celko.

Yes, I know that R is not really a domain specific language but a language that is primarily used in one domain. Frink is an example of a language containing a major behavior feature that is specific to its intended application domain. I cannot think of any major language feature of R that is specific to statistics.

Software memory management

I recently wrote about how computer memory capacity limits were a serious constraint for compiler writers. One technique used to increase the amount of memory available to a compiler (back in the days when pointers usually contained 16 bits) is software based paged memory management. Yes, compiler writers were generally willing to take the runtime performance hit to increase effectively accessible memory by around a factor of 10-25 (e.g., a 2 byte number used as an index into an array of 20 to 50 byte records).

The code to iterate over a data structure stored under the control of a software memory manager looks like the following (taken from a C to Pascal translator):

    Var
       Flds   : Sw_Ptr;   (* in practice an integer *)
       T_Node : Sw_Node;  (* in practice a pointer to a record *)
    Begin
    While Flds <> Sw_Nil Do  (* Sw_Nil is the memory manager's Nil value *)
       Begin
       Sw_Node_Ref(Cpswfile, Flds, T_Node, Mm_Readonly);
       If T_Node.Pn^.Node_Is <> N_Is_Field Then
          Verify_Error(Ve_Cputils, Ve_Scan_Fld);
       Scan(T_Node.Pn^.Field_Node.Ftype);
       Flds := T_Node.Pn^.Field_Node.Next;
       End;
    End;

Here Sw_Node_Ref is a procedure in the memory management package that ensures the record denoted by Flds (whose value was obtained by a previous call to Sw_New_Node) is available in memory and returned in T_Node. Had Mm_ReadWrite rather than Mm_Readonly been specified, the memory manager would assume that the record had been modified, and when the page containing the record was swapped out of main memory it would write the contents of that page to the swapfile (specified by the first argument, Cpswfile).

A call to Sw_Node_Ref only guarantees that the record is at the returned location until the next memory management procedure is called. This takes advantage of common usage which is: read a record, do something with its contents and then move on to the next one. The procedure Sw_Node_Ptr is available for when a record needs to be held in main memory across multiple Sw_ calls; this procedure locks a record in the limited capacity memory pool until explicitly freed (a Pascal style Mark/Release system was also available).

Multiple records were overlaid on a page (invariably 512 bytes) of storage. Some implementations used a fancy tool to create the overlay while others did it manually. The size of the pool in main memory used to hold swapped-in pages was specified when the memory manager was initialized; the maximum number of records that could be created by calls to Sw_New_Node was only limited by the maximum value of an integer and available disk space.
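The scheme described above can be sketched in a few lines of Python. This is only an illustration, not the Intermetrics package: the class `SoftwarePager`, its method names, the fixed 32 byte record size, the least-recently-used eviction policy and the dictionary standing in for the swap file are all assumptions made for the example.

```python
from collections import OrderedDict

PAGE_SIZE = 512                    # bytes per page, as in the text
RECORD_SIZE = 32                   # hypothetical fixed record size
RECORDS_PER_PAGE = PAGE_SIZE // RECORD_SIZE
READ_ONLY, READ_WRITE = 0, 1       # stand-ins for Mm_Readonly / Mm_ReadWrite


class SoftwarePager:
    """Toy software memory manager: integer handles map to records on pages."""

    def __init__(self, pool_pages):
        self.pool_pages = pool_pages   # number of pages that may be resident
        self.pool = OrderedDict()      # page number -> bytearray, in LRU order
        self.dirty = set()             # resident pages needing write-back
        self.swap = {}                 # stands in for the swap file on disk
        self.next_handle = 1           # handle 0 plays the role of Sw_Nil
        self.page_faults = 0

    def new_node(self):
        """Allocate a record and return its handle (an integer, not a pointer)."""
        handle = self.next_handle
        self.next_handle += 1
        return handle

    def _load(self, page_no):
        if page_no in self.pool:
            self.pool.move_to_end(page_no)         # now most recently used
            return self.pool[page_no]
        self.page_faults += 1
        if len(self.pool) >= self.pool_pages:      # pool full: evict LRU page
            victim, data = self.pool.popitem(last=False)
            if victim in self.dirty:               # only modified pages are written
                self.swap[victim] = bytes(data)
                self.dirty.discard(victim)
        page = bytearray(self.swap.get(page_no, bytes(PAGE_SIZE)))
        self.pool[page_no] = page
        return page

    def node_ref(self, handle, mode):
        """Return a view of the record; trust it only until the next pager call."""
        page_no, slot = divmod(handle - 1, RECORDS_PER_PAGE)
        page = self._load(page_no)
        if mode == READ_WRITE:
            self.dirty.add(page_no)
        start = slot * RECORD_SIZE
        return memoryview(page)[start:start + RECORD_SIZE]


# 100 records spread over 7 pages, but only 2 pages resident at any time.
pager = SoftwarePager(pool_pages=2)
handles = [pager.new_node() for _ in range(100)]
for i, h in enumerate(handles):
    pager.node_ref(h, READ_WRITE)[0] = i           # store a tag in each record
values = [pager.node_ref(h, READ_ONLY)[0] for h in handles]
```

As in the original, a handle is much smaller than a pointer to the record it names, and a reference obtained with the read-only mode never causes a page to be written back. Writing through a stale view after its page has been evicted would be silently lost in this sketch, which is the reason for the "valid only until the next call" rule.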

I learned about this implementation technique while on secondment at Intermetrics in the early 1980s, and they told me it came from the PQCC project of the mid 1970s. There is a paper in the Proceedings of the 1982 SIGPLAN symposium describing the system/library used by Intermetrics, which rambles on about nothing in particular and fails to say anything about software memory management (it is too useful an idea for a commercial company to tell anybody else); I don’t know of any other published description. Everybody I know who left Intermetrics to work on other compilers created their own implementation of a software memory management package.

Compiling to reduce the impact of soft errors on program output

Optimizing compilers have traditionally made code faster and smaller (sometimes a choice has to be made between faster/larger and slower/smaller). The huge growth in the use of battery powered devices has created a new attribute for writers of optimizers to target: finding code sequences that minimise power consumption (I previously listed this as a major growth area in the next decade). Radiation (e.g., from cosmic rays) can cause a memory or processor bit to flip, known as a soft error, and I have recently been reading about how code can be optimized to reduce the probability that soft errors will alter the external behavior of a running program.

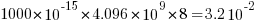

The soft error rate is usually quoted in FITs (Failure in Time), with 1 FIT corresponding to 1 error per $10^{9}$ hours per megabit, or $10^{-15}$ errors per bit-hour. A PC with 4 GB of DRAM (say 1000 FIT/Mb, a rate which increases with altitude and is 10 times greater in Denver, Colorado) has an expected error rate of roughly $1000 \times 10^{-15} \times 4 \times 10^{9} \times 8 \approx 3 \times 10^{-2}$ errors per hour, giving a MTBF (mean time between failure) of around 33 hours. Calculating the FIT for processors is complicated.
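The arithmetic behind the MTBF figure can be checked with a short Python sketch. The function name `mtbf_hours` is made up for the example, and 4 GB is taken to mean 4 × 10^9 bytes, which is an assumption; with these inputs the result is about 31 hours, in the same ballpark as the rounded figure quoted above.

```python
FIT_HOURS = 1e9           # 1 FIT = 1 error per 10^9 hours (per megabit here)
BITS_PER_MEGABIT = 1e6


def mtbf_hours(fit_per_megabit, memory_bits):
    """Mean time between soft errors for a memory of the given size."""
    errors_per_bit_hour = fit_per_megabit / (FIT_HOURS * BITS_PER_MEGABIT)
    errors_per_hour = errors_per_bit_hour * memory_bits
    return 1.0 / errors_per_hour


dram_bits = 4e9 * 8                            # 4 GB taken as 4 * 10^9 bytes
pc_mtbf = mtbf_hours(1000, dram_bits)          # roughly 31 hours
denver_mtbf = mtbf_hours(10_000, dram_bits)    # 10 times the rate: roughly 3 hours
```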

Uncorrected soft errors place a limit on the maximum number of computing nodes that can be usefully used by one application. At around 50,000 nodes, a system will be spending half its time saving checkpoints and restarting from previous checkpoints after an error occurs.

Why not rely on error correcting memory? Super computers containing terabytes are built containing error correcting memory, but this does not make the problem go away, it ‘only’ reduces it by around two orders of magnitude. Builders of commodity processors don’t use much error correction circuitry because it would increase costs/power consumption/etc for an increased level of reliability that the commodity market is not interested in; vendors of high-end processors add significant amounts of error correction circuitry.

Most of the compiler research I am aware of involves soft errors occurring on the processor, and this topic is discussed below; there has been some work on assigning variables deemed to be critical to a subset of memory that is protected with error correcting hardware. Pointers to other compiler research involving memory soft errors welcome.

A commonly used technique for handling hardware faults is redundancy, usually redundant hardware (e.g., three processors performing the same calculation and a majority vote used to decide which of the outputs to accept). Software only approaches include the compiler generating two or more independent machine code sequences for each source code sequence, whose computed values are compared at various check points, and running multiple copies of a program in different threads and comparing outputs. The Shoestring compiler (based on llvm) takes a lightweight approach to redundancy by not duplicating those code sequences that are less affected by register bit flips (e.g., the value obtained from a bitwise AND that extracts 8 bits from a 32-bit register is 75% less likely to deliver an incorrect result than an operation that depends on all 32 bits).
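The 75% figure can be reproduced by brute force: flip each of the 32 bits of a register in turn and count how many flips change the result of an operation. This Python sketch is only an illustration of the masking effect, not anything taken from Shoestring; the function and variable names are made up for the example.

```python
def flips_that_change_result(value, op, width=32):
    """Count the single-bit flips of `value` that alter the result of `op`."""
    baseline = op(value)
    return sum(1 for bit in range(width)
               if op(value ^ (1 << bit)) != baseline)


def low_byte(r):
    return r & 0xFF                # extracts 8 bits from the register


def plus_one(r):
    return (r + 1) & 0xFFFFFFFF    # depends on all 32 bits


value = 0x12345678
masked = flips_that_change_result(value, low_byte)   # 8 of the 32 flips matter
full = flips_that_change_result(value, plus_one)     # all 32 flips matter
reduction = 1 - masked / full                        # 0.75
```

Only flips in the low 8 bits survive the AND, so a uniformly random single-bit flip is visible in 8/32 = 25% of cases.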

The reliability of single ‘thread’ generated code can be improved by optimizing register lifetimes for this purpose. A value is loaded into a register and sometime later it is used one or more times. A soft error corrupting register contents after the last use of the value it contains has no impact on program execution, the soft error has to occur between the load and last use of the value for it to possibly influence program output. One group of researchers modified a compiler (Trimaran) to order register usage such that the average interval between load and last usage was reduced by 10%, compared to the default behavior.
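A very simplified model of this exposure, sketched in Python: treat each value as a (load cycle, last use cycle) pair occupying one register, and assume a single bit flip lands on a uniformly random register at a uniformly random cycle. The two schedules below are invented numbers chosen to show a 10% reduction; they are not taken from the Trimaran work.

```python
def vulnerable_cycles(lifetimes):
    """Total cycles during which some register holds a value still to be used."""
    return sum(last_use - load for load, last_use in lifetimes)


def hit_probability(lifetimes, total_cycles, registers):
    """Chance that one random (register, cycle) flip lands on a live value."""
    return vulnerable_cycles(lifetimes) / (total_cycles * registers)


# Hypothetical (load, last_use) cycle pairs, before and after reordering.
default_schedule = [(0, 10), (2, 12), (5, 25)]     # 40 vulnerable cycles
reordered_schedule = [(0, 9), (2, 11), (5, 23)]    # 36 vulnerable cycles

before = hit_probability(default_schedule, total_cycles=30, registers=4)
after = hit_probability(reordered_schedule, total_cycles=30, registers=4)
```

In this model the probability of a flip mattering scales directly with the summed load-to-last-use intervals, so shortening them by 10% lowers the exposure by the same proportion.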

Developers don’t have to wait for compiler or hardware support; they can improve reliability by using algorithms that are robust in the presence of ‘faulty’ hardware. For instance, the traditional algorithms for two-process mutual exclusion are not fault-tolerant; a fault-tolerant mutual exclusion algorithm is available that tolerates a single fault in each of a bounded number of its variables.

Abramowitz and Stegun mark II

Like me, I imagine many readers have owned a copy of the Handbook of Mathematical Functions (or, to use its better known name, “Abramowitz and Stegun”, after its two editors). Some time ago I heard that an updated handbook was being created; time passed and last year the “NIST Handbook of Mathematical Functions” was published. The companion web site has been slowly evolving over the years.

I did not hear anybody raving about the updated handbook and it was priced at more than twice that of the original (which is in the public domain, and thus open to Dover to print a low cost edition and to others to make available online; NIST are claiming copyright over the updated version, which is published by Cambridge University Press), so I did not rush out to buy a copy.

I recently placed a large order with Amazon US and was tempted by a temporary price reduction to buy the NIST handbook (tip for Europeans: it is often possible to make big savings by ordering from amazon.com, which seems to ship from Germany and arrives a few days later than orders placed with amazon.co.uk).

Summary recommendation:

- Should somebody who has the original handbook buy the update? Probably not.

- If somebody had a choice of either, which should they pick? I would go for the original handbook.

The major differences between the handbooks are that the substantial number of precomputed tables of function values are not included in the update and that there are 12 new chapters covering subjects not included (or not given much prominence) in the original. A less important difference is the switch from black & white to color in the update; this works well in the online version (on the CD shipped with the book) but poorly in print. If a book is intended to be printed, its color usage needs to be optimized for reflected light, which has different characteristics from the transmitted light of a display.

The argument for removing the tables of values is that software packages can now be used to obtain these. In practice I rarely use the tables of values for this purpose; I use the tables to find the range of function input values that will generate a given range of output values, or to see how output values change with changes in input values. For me, omitting these tables in the update was a big mistake; ok, the number of significant digits could have been reduced (to say five) to save some paper. The new chapters often contain various tables of numbers, although they are not extensive, but a conscious decision seems to have been made to remove tables from existing chapters.
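The kind of table lookup described above is easy to mimic in software, although it takes more effort than glancing at a printed page. A minimal Python sketch, using the standard library's `math.erf` as the example function; the helper names `table` and `inputs_for_output_range` are made up for the illustration.

```python
import math


def table(fn, start, stop, step, digits=5):
    """Tabulate fn from start to stop, rounding values to `digits` significant digits."""
    n = int(round((stop - start) / step))
    rows = []
    for i in range(n + 1):
        x = round(start + i * step, 10)            # tidy up floating-point drift
        rows.append((x, float(f"{fn(x):.{digits}g}")))
    return rows


def inputs_for_output_range(rows, lo, hi):
    """Smallest and largest tabulated input whose output lies in [lo, hi]."""
    xs = [x for x, y in rows if lo <= y <= hi]
    return (min(xs), max(xs)) if xs else None


erf_table = table(math.erf, 0.0, 2.0, 0.1)
x_range = inputs_for_output_range(erf_table, 0.5, 0.9)   # (0.5, 1.1)
```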

From a user interface point of view I don’t like the glossy paper used in the update, presumably caused by the switch to color which does not work well in the printed version; the angle of the page has to be constantly shifted to reduce glare from overhead lights and the handbook is noticeably heavier even though the page count is down by around 20% (886 vs 1030, excluding index which is substantially improved in the update).

The original has lots of tables, matte pages that don’t glare and is surprisingly light for such a big book. Time will tell whether I find the new chapters useful.
